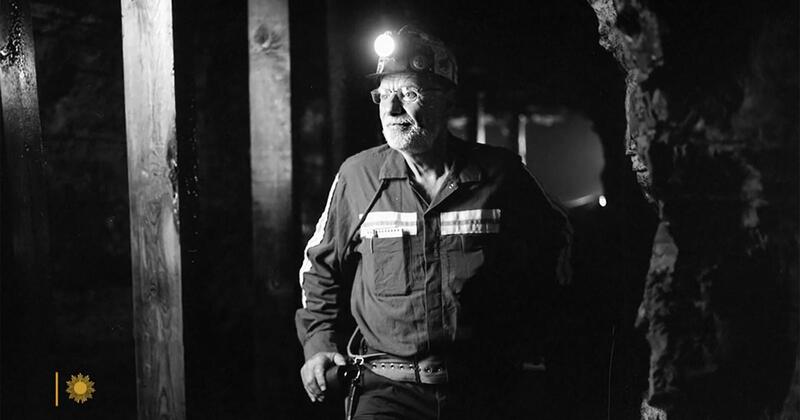

There's a chapter in Michael Lewis's latest book, Who Is Government?, about a man called Chris Mark. He spent his career at the US Mine Safety and Health Administration trying to stop coal mine roofs from falling on people's heads. It is, on the face of it, the least charity-sector story imaginable. Bear with me.

In 2016, for the first time in the recorded history of American coal mining, not a single underground coal miner was killed by a roof fall. Zero. Fifty years earlier, roof falls had killed close to a hundred miners a year and were the single biggest cause of death in what was then the most dangerous job in the country. That change didn't come from better helmets or tougher enforcement or a rousing speech. It came, in large part, from one engineer quietly refusing to accept the data — or the forces — that his field thought were the important ones.

I think there's something in this for those of us who work on impact in the charity sector. Quite a lot, actually.

The question Chris Mark asked that nobody else was asking

Mark started out as an actual coal miner in West Virginia in the 1970s, then went back and did a PhD in mining engineering. When he landed at the (then) Bureau of Mines, he noticed something odd. The research the Bureau funded was mostly about keeping mines productive and stable — the kind of thing the industry wanted to know. It was not, particularly, about the things that were killing miners.

So he asked a deceptively simple question: what's actually killing people?

Not "what does the industry think is important." Not "what's easy to measure." Not "what's in the current research programme." What is actually causing the harm we say we exist to prevent.

If you work in impact reporting, you will recognise this question as the one almost nobody is asking. We measure what funders ask for. We measure what our CRM happens to capture. We measure the outputs that look good in an annual report. We measure the things our delivery team is already counting because they have to count them anyway. And then we write a theory of change that makes all of that look intentional.

The Chris Mark move is to stop, look up from the spreadsheet, and ask: of all the ways our beneficiaries' lives can go wrong, which ones are actually going wrong, and how often, and to whom? And then — and this is the hard bit — are we measuring those things, or are we measuring the things that were convenient to measure?

Statistics in a field that didn't speak statistics

The second thing Mark did was unusual for his profession. He treated mine safety as a statistical problem rather than an engineering one.

There's a line he wrote later that I love: the language of statistics, he said, is foreign to rock engineers, who are trained to see the world in terms of load and deformation, where failure is simply a matter of stress exceeding strength. In other words, engineers thought about roof collapses deterministically. The roof either holds or it doesn't. You calculate the forces, you specify the supports, you move on.

Mark's insight was that this was the wrong mental model. Predicting a roof collapse, he said, was less like evaluating a suspension bridge and more like predicting how a baseball player will hit next season. No matter what you did, you were going to be wrong some of the time. The best you could do was improve the odds. And the way to improve the odds was to collect a lot of data from roof failures and look for patterns.

Charity impact reporting has exactly the same problem, inverted. We are surrounded by outcomes that are genuinely probabilistic — whether a young person stays in education, whether a family avoids crisis, whether a community's health improves — and we insist on reporting them deterministically. We pick a beneficiary, we tell their story, we imply the intervention caused the outcome, we move on. The case study is the load-and-deformation model of the charity sector. It treats each individual as a little bridge that either held or didn't.

What Chris Mark would do, if he worked at a charity, is refuse to talk about individual cases until he'd built a dataset of thousands of them and gone looking for the patterns. Which interventions, in which conditions, for which people, shift the odds by how much? That's a different kind of question, and it produces a different kind of answer, and most charities are not set up to ask it.

He widened what counted as data

Here's the bit I find most interesting, and the bit I think the sector most needs to hear. Mark didn't just do better statistics on the data his field already collected. He systematically expanded what counted as data in the first place — in three directions at once.

He widened the outcome. His peers counted fatalities. Mark counted fatalities, non-fatal injuries, and reportable roof falls that hadn't injured anyone at all. He has a paper titled, almost literally, "What Reportable Noninjury Roof Falls in Underground Coal Mines Can Tell Us", and the answer is: an enormous amount, because there are vastly more near-misses than deaths, and the near-misses contain most of the signal about what conditions precede a collapse. If you only study the fatal events, you're working from a tiny, skewed sample of a much larger phenomenon.

He widened the forces. Traditional roof engineering focused on vertical stress — the weight of the mountain pressing down on the pillars. Obvious, intuitive, and, it turned out, only half the story. Mark and his colleagues showed that horizontal stress — the sideways tectonic forces squeezing the rock — was often higher than vertical stress in coal-bearing formations, and was causing collapses that the vertical-only model simply couldn't explain. He then developed guidelines for orienting mine tunnels relative to the direction of horizontal stress, which is the sort of intervention that looks trivial on paper and saves lives in practice.

He widened the context. Roofs don't fail in the abstract. They fail in specific geological conditions — bedding planes, moisture sensitivity, the strength of individual rock layers, the quality of the contact between them. Mark developed something called the Coal Mine Roof Rating (CMRR), a scale for characterising the actual rock above a mine, and it's now used globally. The point is subtle but important: you cannot predict whether a given support will hold until you know what kind of roof it's holding up.

Each of these moves looks like a technical improvement. What they actually are is a refusal to accept the inherited definition of the problem. His peers were answering the question "given these outcomes, these forces, and these conditions, is this mine safe?" Mark kept responding: your outcomes are incomplete, your forces are incomplete, and your conditions are a black box. Let's go and measure them.

The charity sector makes all three of the same narrowings, constantly.

We narrow the outcome. We count the people who completed the programme and hit the headline metric, and we don't count the near-misses — the people who almost engaged, the people for whom the intervention nearly worked, the people who got worse in ways adjacent to but not captured by our outcome framework. These cases contain most of the signal about what actually drives change, and we throw almost all of it away.

We narrow the forces. Most theories of change model one mechanism: the thing the charity does, and the outcome it's meant to produce. The sideways forces — the benefits system, the housing market, the school's capacity, the parent's mental health, the local labour market — are treated as context, or noise, or somebody else's problem. But often they are the dominant force, the one doing most of the work on the outcome, and the charity's intervention is a smaller vertical load on top of a much larger horizontal one. If you don't measure the horizontal forces, you will systematically misattribute what's happening in your data.

We narrow the context. We treat beneficiaries as interchangeable units and assume the intervention works the same way regardless of the specific conditions of the person's life. Chris Mark would say: of course it doesn't. The roof rating matters. Show me the rock.

Collect the failure data, including the near-misses

The widening of outcomes is the point that hits hardest for me, so it's worth staying on for a moment. Ask yourself, honestly: does your charity collect data on the people you failed? The ones who dropped out of the programme, the ones for whom the intervention didn't land, the ones who ended up in the situation the intervention was meant to prevent? And — the harder question — do you collect data on the near-misses? The people who almost didn't make it, the ones who hit a crisis mid-programme, the ones whose outcomes were ambiguous and got rounded up in the report?

Most charities don't. Most charities couldn't, even if they wanted to, because the minute someone stops being a clean success story they also stop being in the data. The reporting pipeline is built for the survivors.

This is the same mistake Abraham Wald caught the US military making in World War II. The military wanted to add armour to bombers in the places where returning planes had the most bullet holes. Wald, a statistician, pointed out that they were only looking at the planes that came back. The armour needed to go where the survivors weren't hit — because the planes hit in those places were the ones that didn't return to be studied. It's one of the cleanest illustrations of survivorship bias ever recorded, and it's structurally identical to the error the charity sector makes every time it builds its impact report from the beneficiaries it successfully served.

If your monitoring pipeline can't see failure, it can't learn. If it can't see near-misses either, it's working from an even smaller slice of reality than it thinks.
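
To put rough numbers on that, here is a minimal Python sketch. The cohort figures are invented purely for illustration, and the function name is my own rather than anything from Mark's work or a real monitoring system. It compares the success rate you would report if dropouts vanish from the data with the rate across everyone who enrolled.

```python
def success_rates(enrolled, completed, succeeded):
    """Return (rate among completers only, rate among everyone enrolled)."""
    if not (0 < completed <= enrolled) or not (0 <= succeeded <= completed):
        raise ValueError("expected 0 <= succeeded <= completed <= enrolled, completed > 0")
    return succeeded / completed, succeeded / enrolled


# Hypothetical cohort: 200 people start, 120 finish, 90 hit the headline outcome.
survivor_rate, full_rate = success_rates(enrolled=200, completed=120, succeeded=90)

print(f"Reported from completers only: {survivor_rate:.0%}")
print(f"Measured across everyone enrolled: {full_rate:.0%}")
```

The gap between the two figures is made up entirely of people the survivor-only pipeline never sees. Even the second figure assumes you know what happened to those who left, which is exactly the data most pipelines drop.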

Why this had to be the federal government

There's one more bit of the Mark story that I think the sector needs to hear, and it's uncomfortable.

Mark himself has said this work had to happen inside the federal government, not in private industry. His reasoning was simple: if a private coal company had worked out a better way to design mine pillars, they would have kept it proprietary, because it would have saved them money and given them an edge. The federal government's job was the opposite — to take whatever worked and diffuse it as widely and as fast as possible, because the point wasn't competitive advantage, it was fewer dead miners.

The charity sector tells itself it operates on the federal-government logic. We are, supposedly, collaborative, mission-driven, in it for the beneficiaries, happy to share what works. In practice, we operate much closer to the coal-company logic. Our impact data is a fundraising asset. Our methodologies are differentiators. Our "learnings" get published only once they've been safely repackaged as a success story for the next bid. The stuff that would actually help another charity do its job better — the failure data, the null results, the honest account of what didn't work and why — stays locked in the building.

If we took Chris Mark seriously, we would be pooling outcome data across organisations working on the same problem, publishing the things that didn't work, and treating methodological improvements as public goods rather than competitive moats. Some corners of the sector do this. Most don't.

What this looks like in practice

I don't want to end on a sermon, so let me try to make this concrete. If you're building an impact strategy and you want to steal from Chris Mark, here's what I think it looks like:

- Start with the harm, not the activity. Before you design the measurement framework, write down — in plain language, with actual numbers where you can get them — what bad thing is happening to your beneficiaries, how often, and to whom. Then ask whether your current metrics tell you anything about that bad thing, or whether they just tell you about your own activity levels.

- Widen the outcome. Count the near-misses as well as the hits and the clean failures. Build the monitoring pipeline so that ambiguous, partial, and negative outcomes are still visible in the data. Most of the signal lives there.

- Widen the forces. Model the horizontal stresses — the housing, the benefits, the labour market, the school, the family — as variables in your theory of change, not as background noise. If a sideways force is doing more of the work than your intervention, you need to know that. If it's not, you need to know that too.

- Widen the context. Stop treating beneficiaries as interchangeable. Characterise the actual conditions of the people you work with in enough detail that you can ask which interventions work for whom. That's the charity-sector equivalent of Mark's roof rating, and it's the thing that turns an impact report into something you can actually learn from.

- Think probabilistically. Stop asking "did this person's life improve" and start asking "by how much did we shift the odds, for people like this, in conditions like these." It's a harder question. It needs more data and more honesty about uncertainty. It's also the only question whose answer compounds over time.

- Treat methodology as a public good. When you find something that works, publish it in enough detail that another charity could copy it. When you find something that doesn't work, publish that too. The sector's roof is falling in on a lot of people. Hoarding the blueprints doesn't help.
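
The "widen the context" and "think probabilistically" points above can be sketched in a few lines of Python. Everything here is an assumption for illustration: the records are invented, the two housing categories stand in for whatever context you actually characterise, and the sketch presumes you have some comparison group of similar people who did not take part. It reports outcome rates per context rather than one headline number.

```python
from collections import defaultdict

# Hypothetical records: (context, took_part, good_outcome). Invented for illustration.
records = (
    [("stable housing", True, True)] * 30 + [("stable housing", True, False)] * 10
    + [("stable housing", False, True)] * 28 + [("stable housing", False, False)] * 12
    + [("insecure housing", True, True)] * 18 + [("insecure housing", True, False)] * 22
    + [("insecure housing", False, True)] * 8 + [("insecure housing", False, False)] * 32
)


def outcome_rates_by_context(rows):
    """For each context, return (rate with programme, rate without programme)."""
    # counts[context][took_part] holds [good outcomes, total people]
    counts = defaultdict(lambda: {True: [0, 0], False: [0, 0]})
    for context, took_part, good in rows:
        counts[context][took_part][0] += int(good)
        counts[context][took_part][1] += 1

    rates = {}
    for context, groups in counts.items():
        (good_in, n_in), (good_out, n_out) = groups[True], groups[False]
        if n_in == 0 or n_out == 0:
            continue  # no comparison possible for this context
        rates[context] = (good_in / n_in, good_out / n_out)
    return rates


for context, (with_prog, without) in outcome_rates_by_context(records).items():
    print(f"{context}: {with_prog:.0%} vs {without:.0%} (shift {with_prog - without:+.0%})")
```

A raw difference like this is not proof of causation, and with small groups it will be noisy. The point of the sketch is only the shape of the question: the same programme can shift the odds a lot in one context and barely at all in another, and a single pooled figure would hide that.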

Chris Mark spent forty years on pillars and roof bolts and stability factors and horizontal stress and rock ratings, and the payoff was a year in which nobody died. It's not a glamorous story. It's a story about patient quantitative work, asking unfashionable questions, and refusing to accept the inherited definition of the problem. The charity sector could use a few more people like that, and a few more leaders willing to give them the room to do the work.

The first step is the one Mark took in his first week on the job. Look up from the spreadsheet and ask what's actually killing people — and then ask whether you're even measuring the right things to find out.